Creating Smart AI Agents is Essential

Welcome to the era of the Agentic Revolution.

If 2024 marked the era of “RAG” (Retrieval-Augmented Generation) and 2025 represented the age of “Agentic Frameworks,” then 2026 is undeniably the year of the Autonomous Orchestrator.

We have advanced beyond basic prompt-response interactions. Companies now want a bot that not only communicates but also processes information, takes action, and corrects itself.

This is where LangGraph excels. By 2026, it has established itself as the industry benchmark for creating stateful, multi-actor AI systems that not only adhere to a script but also address challenges.

What is LangGraph? (The 2026 Outlook)

In the early days of generative AI, we treated an LLM like a vending machine: you input a prompt, and you receive a reply.

However, real-life tasks, such as reviewing a financial statement or overseeing a worldwide supply chain, are iterative. They need loops, retries, and continuous state updates.

LangGraph is a library from the LangChain ecosystem, designed specifically for building stateful, multi-agent applications as graphs.

The Importance of Graphs Today

Standard AI workflows are typically “Directed Acyclic Graphs” (DAGs), meaning that data flows in a single direction and never loops back.

LangGraph introduces Cycles. This enables an agent to:

- Reflect: “Did my previous answer truly address the user’s query?”

- Correct: “Hold on, that API request was unsuccessful. Let me try another search term.”

- Loop: “I must continue this process until the produced code passes all unit tests.”
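The reflect, correct, and loop behaviors can be sketched in plain Python without any framework. This is only an illustration of the idea of a cycle, not LangGraph's API: `generate`, `passes_tests`, and `MAX_ATTEMPTS` are hypothetical names, and the "model" is a stub that happens to succeed on its third draft.

```python
MAX_ATTEMPTS = 5  # hypothetical safety cap so the loop always terminates


def generate(attempt: int) -> str:
    """Stub standing in for an LLM call; only the third draft is correct."""
    return "correct code" if attempt >= 3 else f"buggy draft {attempt}"


def passes_tests(code: str) -> bool:
    """Stub standing in for a unit-test run (the 'reflect' step)."""
    return code == "correct code"


def run_until_passing() -> dict:
    state = {"attempts": 0, "code": None, "passed": False}
    while state["attempts"] < MAX_ATTEMPTS and not state["passed"]:
        state["attempts"] += 1                         # loop: go around again
        state["code"] = generate(state["attempts"])    # correct: new draft
        state["passed"] = passes_tests(state["code"])  # reflect: did it work?
    return state


result = run_until_passing()
print(result)
```

A linear pipeline would have stopped after the first buggy draft; the cycle keeps going until the check passes or the cap is reached.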

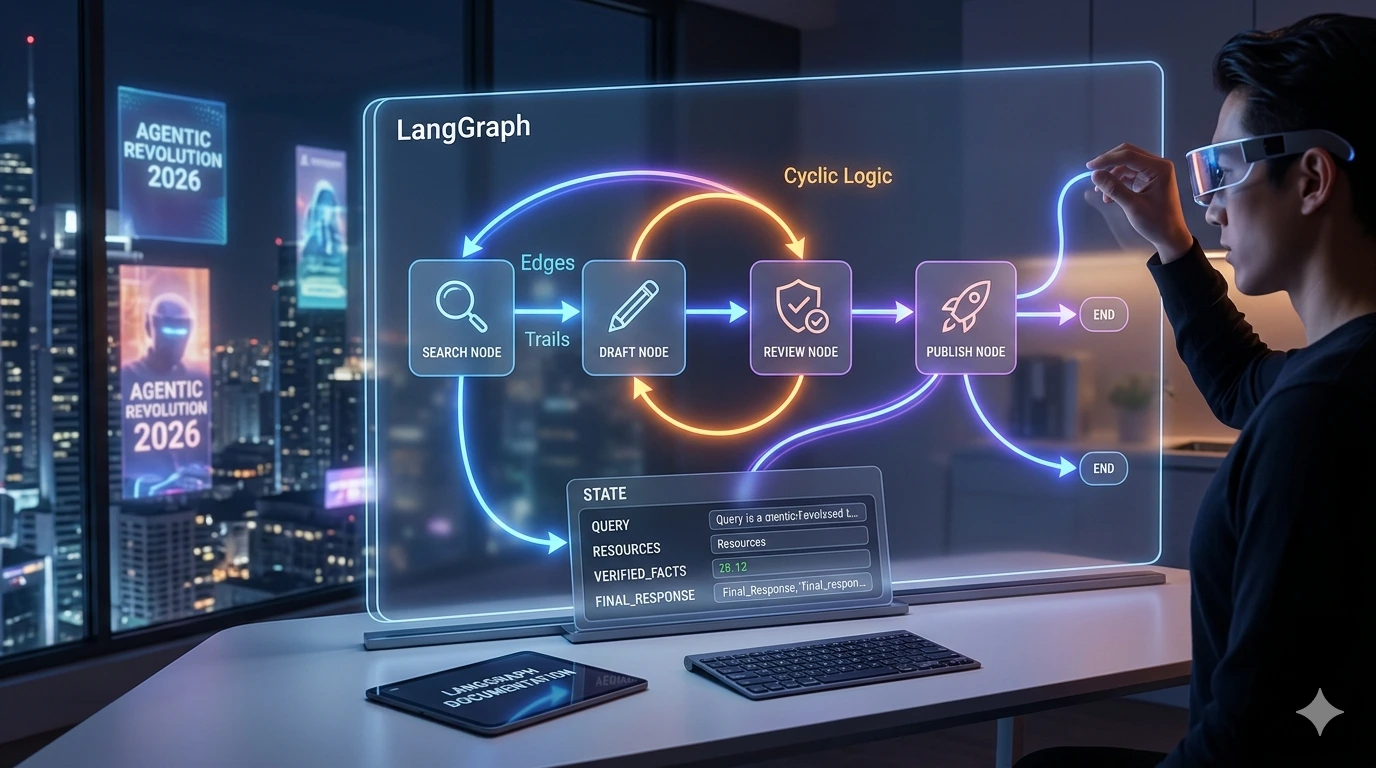

Visualizing the Structure: State, Nodes, and Edges

To use LangGraph well in 2026, you need to understand three key pillars.

Imagine it as a commercial kitchen:

1. The State (The “Order Ticket”)

In 2026, we use Typed State. This is a shared data structure (like a Python TypedDict) that every node in your graph can read from and write to. It’s the “source of truth” that persists across the entire conversation.

2. Nodes (The “Specialized Chefs”)

Nodes are Python functions. Each node represents a specific “worker.” One node might be the “Researcher,” another the “Fact-Checker,” and a third the “Head Chef” who compiles the final response.
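To make the kitchen analogy concrete, here is a minimal plain-Python sketch of nodes sharing one state. It assumes each node reads the state and returns only the keys it wants to change; `KitchenState`, the three node functions, and `apply_update` are hypothetical names, and the merging that `apply_update` does by hand here is the kind of work the framework does for you.

```python
from typing import TypedDict


class KitchenState(TypedDict, total=False):
    """The shared 'order ticket' that every node reads and updates."""
    question: str
    notes: list[str]
    verified: bool
    answer: str


def researcher(state: KitchenState) -> dict:
    return {"notes": [f"finding about {state['question']}"]}


def fact_checker(state: KitchenState) -> dict:
    return {"verified": len(state["notes"]) > 0}


def head_chef(state: KitchenState) -> dict:
    return {"answer": f"{state['notes'][0]} (verified={state['verified']})"}


def apply_update(state: KitchenState, update: dict) -> KitchenState:
    """Merge a node's partial update into the shared state (last write wins)."""
    return {**state, **update}


state: KitchenState = {"question": "meal deductions"}
for node in (researcher, fact_checker, head_chef):
    state = apply_update(state, node(state))

print(state["answer"])
```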

3. Edges (The “Expeditor”)

Edges connect your nodes.

- Normal Edges: “After the Researcher is done, always go to the Fact-Checker.”

- Conditional Edges: This is the brain. The agent looks at the current State and decides: “If the facts are verified, go to the Writer. If not, go back to the Researcher.”
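The two edge types can be sketched in plain Python as a fixed lookup table (normal edges) and a function that inspects the state (conditional edge). This is an illustration under assumptions, not LangGraph's API: `NODES`, `NORMAL_EDGES`, `route_after_fact_check`, and `run` are hypothetical names, and the fact-checker is rigged to fail once so the loop back to the Researcher is visible.

```python
def researcher(state: dict) -> dict:
    # Each pass adds one more source; the second pass is "good enough".
    return {"sources": state["sources"] + 1}


def fact_checker(state: dict) -> dict:
    return {"verified": state["sources"] >= 2}


def writer(state: dict) -> dict:
    return {"draft": f"Report based on {state['sources']} sources"}


NODES = {"researcher": researcher, "fact_checker": fact_checker, "writer": writer}

# Normal edges: a fixed "always go here next" mapping.
NORMAL_EDGES = {"researcher": "fact_checker", "writer": "END"}


def route_after_fact_check(state: dict) -> str:
    """Conditional edge: inspect the state and pick the next node."""
    return "writer" if state["verified"] else "researcher"


def run(state: dict, start: str = "researcher") -> tuple[dict, list[str]]:
    current, visited = start, []
    while current != "END":
        visited.append(current)
        state = {**state, **NODES[current](state)}
        if current == "fact_checker":
            current = route_after_fact_check(state)
        else:
            current = NORMAL_EDGES[current]
    return state, visited


final_state, path = run({"sources": 0, "verified": False})
print(path)
```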

Watch & Learn: The Ultimate 2026 LangGraph Masterclass

If you prefer a visual walkthrough, we highly recommend this comprehensive tutorial from Wizard Engineer, which covers everything from basic setup to advanced multi-agent patterns in 2026.

Video Title: Complete LangGraph Tutorial Beginner To Advance 2026 | RAG-Full-Course

Key Video Highlights:

- [01:16] – What is LangGraph? A deep dive into stateful agents and graph-based logic.

- [09:12] – Building Your First Graph: Step-by-step instructions for beginners.

- [53:14] – Corrective RAG Pipeline: Learn how to build a system that fixes its own retrieval errors.

- [01:53:55] – Multi-Agent Systems: How to use the “Handoff Pattern” to pass tasks between specialized bots.

LangChain vs. LangGraph: The 2026 Comparison

Many beginners ask, “Do I still need LangChain?” The answer is yes. LangChain provides the tools (connectors, prompts, models), while LangGraph provides the logic (the workflow).

| Feature | LangChain (Standard) | LangGraph (2026) |
| --- | --- | --- |
| Logic Flow | Linear / Sequential | Cyclic / Looping |
| State Management | Hard to maintain manually | Native, persistent “State” object |
| Error Handling | Usually fails on first error | Can loop back and self-correct |
| Human Approval | Complex to implement | Native “Human-in-the-Loop” support |
| Best For | Simple RAG, Summaries | Complex Agents, Coding Assistants |

Real-World Example: The “Fintech Audit” Agent

Imagine a 2026 AI system designed to audit company expenses. It doesn’t just scan receipts; it cross-references them with tax laws and asks for human clarification when a “gray area” is found.

Interaction Chat Table

| Step | Actor | Action | State Update |
| --- | --- | --- | --- |
| 1 | User | Uploads $5,000 “Dinner” receipt. | receipt_value: 5000 |
| 2 | Researcher Node | Checks 2026 IRS meal deduction limits. | limit: 500 / person |
| 3 | Logic Edge | Detects discrepancy ($5,000 vs $500). | status: "flagged" |
| 4 | Human Node | [PAUSED] Admin: “Was this a large event?” | admin_note: "100 guests" |
| 5 | Audit Node | Recalculates: $5,000 / 100 guests = $50 per person, under the $500 limit. OK. | status: "approved" |
| 6 | Final Node | “Expense approved. Event details logged.” | final_report: "..." |
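The walk-through in the table can be sketched in plain Python. Everything here is illustrative: `PER_PERSON_LIMIT` reuses the $500 figure from the table and is not a real tax value, the function names are hypothetical, and the human pause is simulated by passing the admin's answer in as an argument.

```python
PER_PERSON_LIMIT = 500  # illustrative figure taken from the table above


def check_limit(state: dict) -> dict:
    """Researcher + logic edge: flag receipts above the per-person limit."""
    guests = state.get("guests", 1)
    per_person = state["receipt_value"] / guests
    status = "approved" if per_person <= PER_PERSON_LIMIT else "flagged"
    return {**state, "per_person": per_person, "status": status}


def human_review(state: dict, admin_guest_count: int) -> dict:
    """Human node: a real system would pause here and wait for input."""
    return {
        **state,
        "guests": admin_guest_count,
        "admin_note": f"{admin_guest_count} guests",
    }


def audit(receipt_value: float, admin_guest_count: int) -> dict:
    state = check_limit({"receipt_value": receipt_value})
    if state["status"] == "flagged":
        state = human_review(state, admin_guest_count)
        state = check_limit(state)  # re-run the check with the new facts
    return state


first_pass = check_limit({"receipt_value": 5000})
final = audit(5000, admin_guest_count=100)
print(first_pass["status"], "->", final["status"])
```

The receipt is flagged only while the guest count is unknown; once the admin supplies it, the same check passes because the per-person cost drops to $50.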

Advanced 2026 Feature: “Time Travel” Debugging

One of the most useful features in LangGraph is Checkpointing. When a checkpointer is attached, the state is saved after every step, so you can literally “travel back in time.”

If your agent made a logic error at Step 10, you don’t have to restart the whole process. In 2026, you can:

- View the state at Step 9.

- Manually edit the “wrong” thought the AI had.

- Resume the execution from that point.

This has reduced AI development costs by nearly 60% because it eliminates the need to pay for repetitive LLM calls during the testing phase.
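The view, edit, and resume steps can be illustrated with a plain-Python sketch that snapshots the state after every step. This shows the mechanism only and is not LangGraph's checkpointing API: `step`, `run`, and the history lists are hypothetical names, and the node is a stub that makes no LLM call.

```python
import copy


def step(state: dict) -> dict:
    """Stub node: appends a 'thought' and advances the step counter."""
    n = state["step"] + 1
    return {"step": n, "thoughts": state["thoughts"] + [f"thought {n}"]}


def run(state: dict, until_step: int, history: list) -> dict:
    """Run steps until `until_step`, checkpointing after every step."""
    while state["step"] < until_step:
        state = step(state)
        history.append(copy.deepcopy(state))  # checkpoint
    return state


history: list = []
run({"step": 0, "thoughts": []}, until_step=10, history=history)

# "Travel back": load the checkpoint saved after step 9 (index 8).
checkpoint = copy.deepcopy(history[8])
checkpoint["thoughts"][-1] = "corrected thought 9"  # manual edit

# Resume from step 9; only step 10 is re-executed, not steps 1-9.
replay_history: list = []
resumed = run(checkpoint, until_step=10, history=replay_history)
print(len(replay_history), resumed["thoughts"][-2:])
```

Because the resumed run starts from the saved snapshot, the nine earlier steps are not executed again, which is where the savings on repeated calls come from.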

How to Build Your First Agent (The 2026 Setup)

1. Installation

Ensure you have the latest 2026 libraries:

    pip install -U langgraph langchain_openai langsmith

2. Define the State

    from typing import Annotated, TypedDict

    from langgraph.graph.message import add_messages


    class MyState(TypedDict):
        # This automatically appends new messages to history
        messages: Annotated[list, add_messages]

3. Compile the Graph

The compilation step checks the structure of your graph and turns your Python logic into a “Runnable” that you can invoke locally or deploy to the cloud.

Pro Tip: Always use LangSmith for tracing. In 2026, if you aren’t tracing your graphs, you are flying blind.

10 Frequently Asked Questions (FAQs)

1. Is LangGraph free?

The library is open-source (MIT License). You only pay for the tokens used by your chosen LLM (like GPT-5 or Gemini 3).

2. Can I use local models?

Yes. In 2026, many developers use Ollama to run LangGraph nodes locally for 100% data privacy.

3. What is the “Recursion Limit”?

It’s a safety setting. If your agent gets stuck in an infinite loop, LangGraph stops the run once it hits the recursion limit (default 25) and raises a GraphRecursionError, which saves you from paying for runaway LLM calls.
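The idea behind the limit can be shown with a small plain-Python sketch. It is not LangGraph's implementation: `RecursionLimitReached` and `run_with_limit` are hypothetical names, and the default of 25 simply mirrors the figure quoted above.

```python
class RecursionLimitReached(RuntimeError):
    """Hypothetical error for this sketch; raised when the step budget runs out."""


def run_with_limit(node, state: dict, is_done, recursion_limit: int = 25) -> dict:
    steps = 0
    while not is_done(state):
        if steps >= recursion_limit:
            raise RecursionLimitReached(f"stopped after {steps} steps")
        state = node(state)
        steps += 1
    return state


def stuck_node(state: dict) -> dict:
    # Never sets "done", so the loop would run forever without the limit.
    return {**state, "calls": state["calls"] + 1}


try:
    run_with_limit(stuck_node, {"calls": 0, "done": False}, lambda s: s["done"])
    outcome = "finished"
except RecursionLimitReached as err:
    outcome = str(err)

print(outcome)
```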

4. Does it work with JavaScript?

Yes, langgraphjs is fully supported for Web and Node.js developers.

5. What is a “Supervisor” Multi-Agent system?

It’s where one “Manager Agent” node decides which “Worker Agent” node should handle the next task.
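A plain-Python sketch of the pattern, under loud assumptions: `supervisor`, `WORKERS`, and the two worker functions are hypothetical, and the manager uses a trivial rule (does the task contain a digit?) where a real system would ask an LLM to choose.

```python
def math_worker(task: str) -> str:
    return f"math result for '{task}'"


def search_worker(task: str) -> str:
    return f"search result for '{task}'"


WORKERS = {"math": math_worker, "search": search_worker}


def supervisor(task: str) -> str:
    """Stub manager: a real one would ask an LLM which worker fits the task."""
    return "math" if any(ch.isdigit() for ch in task) else "search"


def handle(tasks: list[str]) -> list[tuple[str, str]]:
    results = []
    for task in tasks:
        worker_name = supervisor(task)              # manager decides
        output = WORKERS[worker_name](task)         # worker acts
        results.append((worker_name, output))
    return results


results = handle(["add 2 and 3", "latest LangGraph release notes"])
print([name for name, _ in results])
```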

6. Can I stop an agent to ask for permission?

Yes! This is called a Breakpoint. It’s essential for agents that handle payments or send emails.

7. How do I handle thousands of users?

Each user session is assigned a thread_id, which you pass in the run configuration. With a checkpointer attached, LangGraph stores each thread’s state separately, so conversations never mix.
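The isolation idea can be sketched with an in-memory store keyed by thread_id. This is a plain-Python illustration, not LangGraph's checkpointer: `ThreadStore` and its `send` method are hypothetical names.

```python
from collections import defaultdict


class ThreadStore:
    """Hypothetical in-memory store: one message history per thread_id."""

    def __init__(self) -> None:
        self._threads: dict[str, list[str]] = defaultdict(list)

    def send(self, thread_id: str, message: str) -> list[str]:
        history = self._threads[thread_id]
        history.append(message)
        return list(history)  # copy so callers cannot mutate stored state


store = ThreadStore()
store.send("user-alice", "hi")
store.send("user-bob", "hello")
alice_history = store.send("user-alice", "what did I say first?")
bob_history = store.send("user-bob", "and me?")

print(alice_history)
print(bob_history)
```

Each history only ever contains messages sent under its own thread_id, no matter how the calls are interleaved.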

8. Is LangGraph faster than standard LangChain?

It isn’t necessarily “faster,” but it is much more reliable, which is more important for production apps.

9. Can I use multiple different LLMs in one graph?

Yes. You can use a cheap model for “Summarization” nodes and an expensive, smart model for “Decision” nodes.

10. Where is the best place to find real examples?

Check the Official LangGraph GitHub for the latest 2026 templates.


Summary

LangGraph is the bridge between “AI that talks” and “AI that does.” By mastering Nodes, Edges, and State, you are not just writing code; you are building a digital brain. In 2026, the most successful developers aren’t those with the best prompts, but those who can architect the most resilient workflows.

Start small: build a simple chatbot that can search the web and fact-check itself. Once you see the power of a Cycle, you’ll never go back to linear chains again.